Before You Trust the Output: Three Exercises for Teaching AI Evaluation

Three new exercises from the second year of AI Augmented Legal Research, and how they adapt for existing courses, workshops, and firm trainings

I’m Emily, one of VAILL’s collaborators, and I’m back with a follow-up to my post last spring about the first iteration of AI Augmented Legal Research. The course is now in its second year, and much of the original syllabus has carried over. What I want to write about here are three new activities I built this year that I keep coming back to as I think about what the course is actually for and what I want to teach again come fall.

The premise of the class, then and now, is that tool fluency isn’t the goal. Students who learn an AI tool or platform without first learning to interrogate its output only end up with a faster way to be wrong. My course isn’t designed to get students to fully adopt AI. Students are there to critically evaluate what AI does well, when it needs extra attention, and where it fails, with their human judgment required in each step.

After last year’s class, I wanted to give students one thing they asked for: more practice! That meant scaling back some of the lectures and class speakers to devote more time to practicing skills. The three exercises I created to fill that gap are the ones I’m sharing today:

The Anonymization Audit

The Red Line Challenge

The Firm Policy & Tool Application Exercise

Each of these three exercises targets a different layer of the evaluation process I want students to consider: the individual prompt, the produced output, and the organizational decision that determines what students will be allowed to do at all. All three were designed to surface specific blind spots, and all three did, in ways I think are useful to share. (Plus: they also translate outside the for-credit course context, so they can be adapted to whatever format is available to you at your institution!)

Sanitizing Before You Paste

The Anonymization Audit asks students to sanitize a client email before pasting it into a public AI tool. They receive a draft email loaded with the kinds of identifiers people input without thinking: names, employer details, jurisdictions, procedural specifics, and dates. Their task is to produce a version they would actually be willing to send into a non-enterprise environment, then explain what they removed and why.

The pedagogical purpose is to expose the gap between what students know about confidentiality and what they actually notice in the moment. Sanitizing in the abstract is easy. Sanitizing a realistic email under time pressure surfaces every assumption about what counts as identifying information. The exercise also gets at shadow AI use, which formal firm training rarely addresses — we know attorneys are using tools that firms may not have authorized. Do they know what information to remove when using these unsanctioned tools (probably not)?

This also molds with traditional research skills: what information is important to scoping your research question? In reading the email and pulling out what is important for your prompting, you have to be able to issue spot and identify important details that may be important to your research.

Students did well on the traditional identifiers: names, employer details, exact dates. And they didn’t have any ‘formal’ training — they were using just what we’d discussed in class, but nothing approaching any clear “never do this” instructions. Most of them removed that information and generalized it, like “April 3, 2025” to “Spring 2025,” which is the right instinct. Some completely stripped out the location (forgetting jurisdiction), but again, right instinct: they were being extra careful to avoid any sensitive information getting out.

What they almost universally left in were two categories I had not flagged in advance as risks. The first was emotional and mental health details: anxiety, “emotionally overwhelmed,” difficulty sleeping, embarrassment, and isolation. The second was specific workplace dynamics: “largely male department,” exclusion from lunches and breaks, dismissive jokes, and being spoken over in meetings. Neither category is a traditional Rule 1.6 identifier, and wasn’t on my radar to really address, but the combinatorial risk felt real enough for us to discuss. A small workplace, plus a specific gender dynamic, plus an approximate timeframe, is enough to identify a client to anyone who already knows the workplace and would make any client uncomfortable that amount of information was put out there. We all remember the day when ChatGPT users found out their chats were in Google search results — some lawyers got caught up in this, and although that issue has since been addressed, we can never be too careful with these generic tools!

Finding the Errors Under a Clock

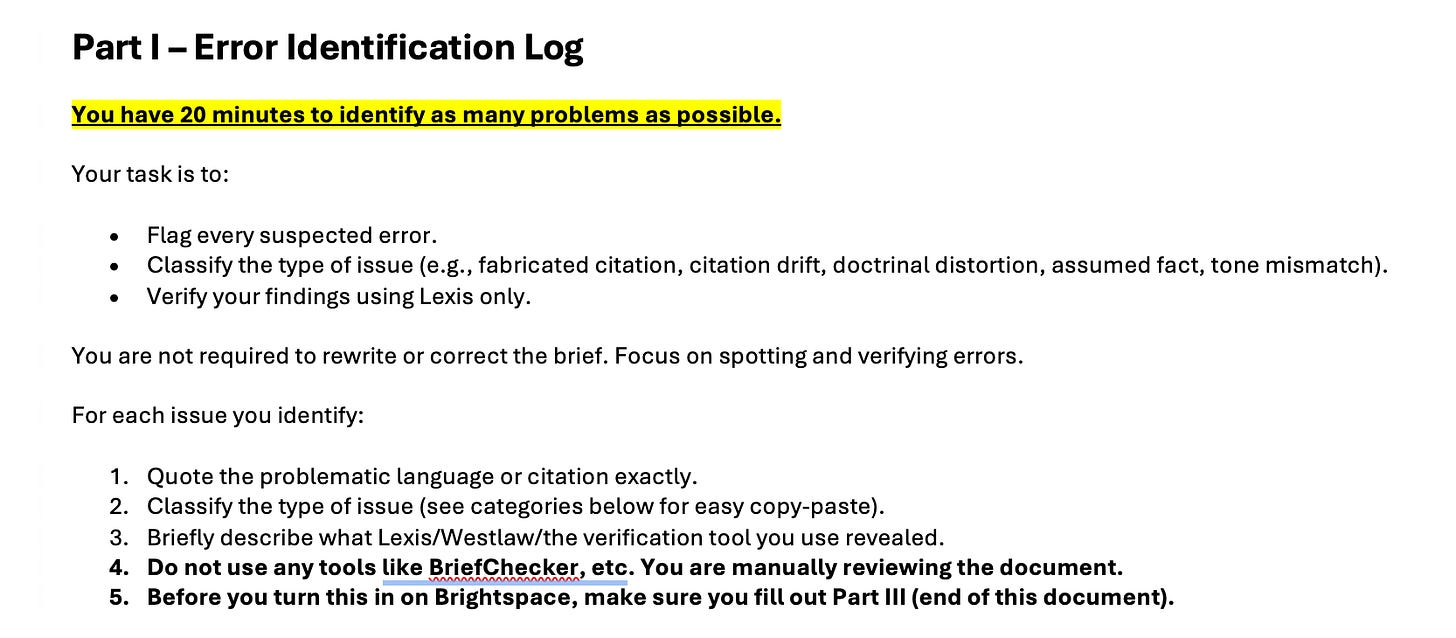

The Red Line Challenge gives students twenty minutes to find and correct every error in a brief that contains fabricated citations, hallucinated holdings, and a layer of subtler citation drift, doctrinal distortion, assumed facts, and tone problems. Students work alone, with only 20 minutes to complete the entire brief before “filing time”. The point is to make them feel the time pressure of real practice. The experience of trying to surface errors on a clock is what builds compassion for attorneys caught by AI hallucinations, because it makes the failure feel less like carelessness and more like an occupational hazard.

The brief contained five fabricated citations, five instances of citation drift in real cases, one doctrinal distortion, around five assumed facts unsupported by the record, and a handful of tone problems. The class average was about two fabrications and one drift incident caught per student. The best fabrication coverage in the class was four catches out of five. But, when I ran this brief through document analyzer tools, the tools caught all five fabrications and all five drift incidents but missed every doctrinal distortion, every assumed fact, and every tone issue.

What that gap tells you is the part I think really works. Students caught the errors that look like errors, like a citation that does not resolve, and missed the errors that look fine on the page: a real case quoted in a way that distorts its holding, a court characterized incorrectly, a procedural posture invented to fit the argument. They also did an excellent job with spotting what felt off or weird, which shows they are able to ‘gut check’ a document and apply heightened scrutiny when they suspect AI-generated work. Existing verification tools have the inverse issue. They catch what is mechanically wrong and miss what is doctrinally wrong. Neither approach is sufficient on its own, which is the real lesson of the exercise.

While the students definitely felt the pressure, the exercise was successful in actually getting them to check the work they were putting their names on. No student walked out of the exercise feeling confident that the brief was in good shape. Everyone wanted more time to work through the document, and most students wanted another person in the loop to double-check their work. This exercise served as a good reminder that is harder to surface in law school but becomes very obvious in practice: when your name is on the document, your reputation is on the line, even if there are others in the loop who you assume will or should catch any errors.

Holding the Lessons at the Firm Level

The Firm Policy assignment moves from individual evaluation to organizational decision-making. Students selected a firm profile and a practice, drafted a firm AI use policy, evaluated a specific AI tool against it, and arrived at an Adopt, Pilot, or Pass recommendation. The exercise asks them to hold the individual evaluation lessons at the firm level, where they have to start thinking about risk tolerance, supervision structure, and what kinds of error the firm can actually absorb.

Students were able to draw on their learning from the course to draft the policy with specific verification and privacy concerns in mind, often based on their summer practice experiences. Every group did this well — no one had a ‘bad’ or ineffectual policy — so what I found to be most interesting, in this context, was the range of outputs from the class.

One student group modeled a boutique firm and chose to adopt Claude as the sole approved tool. To address concerns of this tool not being ‘legal enough’ for the law firm setting,1 they built a four-component verification framework around it, naming citation accuracy, doctrinal accuracy, factual accuracy, and tone as the four checkpoints attorneys had to verify before using any output. Another group chose to represent a legal clinic and produced a policy that engaged directly with the tension between AI efficiency gains for high-volume work, the lack of staff to help in verification cycles, and the elevated stakes of errors in this specific type of representation. The tools they selected all received a pilot recommendation, and that was the right call: the clinic needs the efficiency, so some sort of adoption recommendation felt necessary, but they weren’t able to absorb any errors that could affect a client’s very tense and time-sensitive situation.

Another group did an excellent tool-specific analysis: their team applied their policy to Grammarly and ultimately rejected it on data-retention grounds, specifically its indefinite retention practices. That kind of analysis is exactly the move I want students to make before they are the ones reviewing and approving tools at a firm — they took their firm policy seriously and painstakingly analyzed it against their tool of choice. And they weren’t afraid to pass

Outside the Classroom

That is what these looked like in a for-credit setting, but each exercise took place in a single-week class, where, in the span of 1 hour and 15 minutes, I lectured and did the exercise. Talk about a time crunch!

But I think these exercises can be taken out of that for-credit world and applied more broadly. The Anonymization Audit could be a 45-minute session on confidentiality and AI tools, with the sample email distributed in advance and the debrief taking up most of time. It also works as something you can recommend to firms as an internal audit: have associates redact a sample email, surface what gets left in, and use the conversation to set baselines before approving a new tool or even allowing access to existing tools. It also would fit nicely into a conversation about shadow AI: I think it is a pretty convincing argument to use firm-approved tools when you show attorneys how much information they’d need to remove (and decreasing the utility of the other tools) to protect client data.

The Red Line Challenge drops cleanly into an existing course as a research methods refresher, a workshop or lunchtime session, or any setting where verification skills are the point. It works the same way at a firm: a session at a training day, or an exercise individual groups or partners can run with their new associates before handing over access to AI tools.

On the firm side, I think the Firm Policy Exercise is well-suited to a half-day workshop or a leadership program. A shorter version fits inside a workshop on AI adoption: you could distribute a few firm profiles, give attendees 30 minutes to draft the policy and a recommendation, and use comparisons across groups to make the point about contextual specificity. And you can always take an existing policy and see how groups apply it to different tools in the adopt/pilot/pass framework.

These exercises can be operated without a full course infrastructure or a commitment to a specific tool, and I’m confident there are more ways they can be adapted for training. Any generic chatbot will be a useful brainstorming partner for adapting any of them to your context. Format is the easier piece of this; deciding what kind of evaluation skill you want learners to walk out with is the harder one.

If you decide to use any of these in your teaching, adapt them for a different audience, or build something better, I would be glad to hear about it! Same for exercises you have developed that I have not seen. AI evaluation is going to be taught in a lot of different formats by a lot of different people, and the more we share what is working, the better the whole landscape gets.

And with all the connectors and skill releases as of late, I don’t think anyone will be thinking of Claude as not legal enough again!

This is an extremely useful framework! I think teaching the auditing of AI in legal work will be an essential role for new attorneys.